WHY THIS MATTERS IN BRIEF

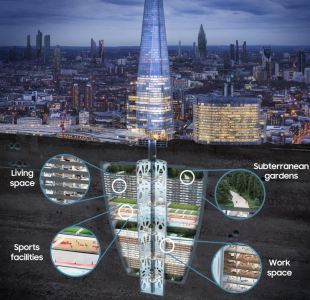

Predicting the future, let alone the deep future, is very difficult, but that doesn’t stop people from trying. So sit back and enjoy this vision of 2069, brought to you by yours truly and Samsung as they celebrate their 50th anniversary, prepare for the next 50 years, and open their new Kings Cross (KX) experience campus.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

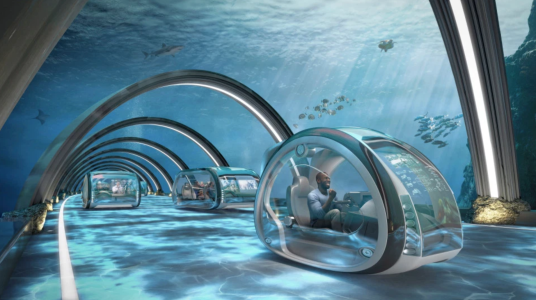

What will we be doing for fun in 2069? My prediction is that the future will still consist of many of the same kinds of pastimes that entertain us today, such as watching and participating in sport, enjoying concerts, games, and movies, as well as base jumping off the cliffs on Neptune – but EVERYTHING will be more intense, more vivid, and more individually tailored. And some of it will blow your mind – literally.

Imagine, for starters, watching Quidditch-style four-dimensional sports matches in a massive stadium, with crowds cheering on players who are astride hover boards or shooting upwards powered by jet packs. Are you actually there? It won’t matter. You could be anywhere in the world, because you will feel as if you are genuinely present. The digitally generated immersive worlds of the future, and Virtual Reality, will be that good.

Watching sport will become a full-on neural assault. You will feel it when the future equivalent of Ellen White is tackled by a future Megan Rapinoe in the 2069 Women’s World Cup. It will be as if you are actually on the pitch alongside the players – actually part of the team. Meanwhile, Artificial Intelligence and a new generation of Creative Machines will let you design any virtual game you like using just your voice or thoughts – no programming or design experience needed – and become the sports star yourself, whether it’s base jumping off the cliffs on Neptune or skydiving through the swirling acidic mists of Venus. You could choose to play a virtual game of soccer against the stars of the past, such as David Beckham or George Best, or Quidditch alongside Harry Potter. You choose: it’s your experience and your world. The norm will be that if you can imagine it, you can create and play it.

Courtesy: Samsung

Effectively, the distinction between ‘real’ sport and computer gaming will be eroded, and you won’t be able to tell the difference: the graphics will be that good, they’ll be beamed into your brain, and haptics and sensor technology will fool all five of your senses into thinking it’s real. The future will offer ever more immersive and convincing virtual reality. Haptic kits already exist that let you experience sensations via armbands, suits and vests, along with 360 degree vision via your headset. This kit will become ever more refined. Those clunky headsets will disappear, replaced by nano-tech Metalenses and eventually by neural interface technologies. There will also be affordable haptic full body suits available both for home use and in arcades, and even haptic technology and a wide range of sensors embedded into your everyday clothing – which will itself be printed on demand using new manufacturing technologies such as Holographic 3D printing.

For gamers who cannot afford to equip themselves with the full rig, future amusement arcades will use neural interfaces to hook people directly into the games telepathically. And, if that’s not your thing, then rather than standing at an arcade machine, players could just walk into Holodecks, a type of room that uses a range of different technologies, including parallax screens, to create a simulated “virtual” environment in the real world that surrounds the player with digitally simulated sounds, sights, scents, and experiences. In a jungle warfare game, for instance, you would be able to feel the moisture dripping onto your skin, hear the hiss of a snake in the undergrowth and the throb of an approaching helicopter, and smell the perfume of exotic flowers mingled with the stink of your comrades’ fear – and not a virtual reality headset, or a neural interface, in sight.

Virtual reality and other new tech will also help ageing sports stars like Beckham and Roger Federer prolong their professional careers. They won’t need a human sports coach to lift their game in the future. Real and Virtual Reality training, combined with Artificial Intelligence coaches enabled by Machine Vision and data, will allow them to try out an infinite number of different plays, while AI will be used to analyse opponents’ game play, skills and style, and then coach players in the best strategies to copy and counter them. In vivo gene editing will also let sports people genetically enhance and augment their physiology to give themselves better reflexes, eyesight, speed and much more. Neuro-prosthetics and neuro-stimulation devices will train their brains so their bodies react faster, improving their performance, and athletes will have, by today’s standards, superabilities. Disabled people will be able to use these same technologies too, so that disabilities are no longer seen as an obstacle to performing at the very highest levels – or even to exceeding them.

Courtesy: Samsung

But not all sporting contests will be human. Inevitably, drones and robots will be playing sports, and any number of new sports could be invented, including space races through the solar system with competitors dodging collisions in the asteroid belt, in the same way that drones today twist and turn through disused warehouses. Given current controversies over allowing disabled athletes with specially designed prostheses to compete alongside able-bodied athletes, imagine the ethical nightmare of determining equitable rules for future sports contests involving cyborgs, genetically enhanced or physically modified athletes, and even animals. Interestingly, CRISPR gene editing tools have already been tried on living patients to eliminate certain genetically inherited diseases, and in 2016 the International Olympic Committee (IOC) started testing for “genetically modified athletes,” a type of “cheating” they refer to as Gene Doping.

As for cinema or theatre, the concept of a screen or a stage will seem quaintly old-fashioned by 2069. We are accustomed to a present-day world where everything is designed and created by people. As AI grows more sophisticated, that will change – dramatically. Increasingly the creators of both artefacts and content will not be human. Artists, authors, bloggers, film makers, musicians, and writers will effectively be redundant. AI will provide the content you desire at any given moment, and it will even adapt the storyline on the fly to re-engage you if it senses, using machine vision, sensors, or even your home WiFi, that you are getting bored.

There will be no need to browse Netflix for the kind of show that you want to watch. This ‘procedural content’ will be beamed directly into people’s brains using non-invasive neural interfaces – why sit in front of a big screen when you could put customers into the movie and then play that movie in their heads? Neural interfaces connected to AI, the web and other “hive minds” in the cloud will gauge your mood and assess that you want a laugh with a witty comedy, or perhaps a good weep over a romantic movie, and that you’d enjoy it most if it were set in a familiar location and featured a character who looked exactly like your first girlfriend – and there it will be, playing in your head.

This frankly all sounds out there, but organisations are already experimenting with brain machine interfaces and opto-electronic devices that use light to affect and modulate the human brain’s synapses in order to edit memories and to download and upload information, and even memories. People will also be able to have microchips embedded in their brains by autonomous robotic surgeons. These chips will be capable of taking a digital input and converting it into a bio-electronic signal that the brain can understand, giving people new mental functionalities such as enhanced memory and recall, for starters. This embedded technology could offer faster data transfer rates than some of the non-invasive alternatives, but which type of neural interface people choose, invasive or non-invasive, would be down to them.

As for Glastonbury and other big rock concerts – well, for diehard traditionalists who like to get muddy, it may be that Michael Eavis’s great-great-granddaughter will still open Worthy Farm every June for real humans to headline on the Pyramid Stage. Most of us will be linked in to such events, wherever they happen in the world, via neural links and haptics. But history shows that, from the Monkees and the Bay City Rollers to The Spice Girls and Take That, the music industry is obsessed with creating the perfect combination of looks, talent and malleability in its stars. Unfortunately, humans are unpredictable and eventually they fall out with each other or think they can go solo. At most future rock concerts, the stars will be digital avatars, the music composed and played by AI.

One last idea to boggle over. People will connect, talk and communicate with one another on social media networks telepathically, again using so-called “internet scale” neural interfaces, and will be able to access and share “hive minds,” in the same way some robots “share minds,” using AI and the cloud, today. As bizarre as this sounds, the former technology is already emerging, and Facebook’s CEO, Mark Zuckerberg, has said he wants “…to turn Facebook into the world’s first telepathic network,” and his team have already made huge progress. However, it will take decades before anyone manages to sort out the necessary regulatory and ethical approvals to allow this sort of technology to get to market – just imagine the privacy settings your account (or brain) would need!

Media Links: AOL, BBC, Daily Mail, ITV, New Atlas, The Sun, The Mirror, The Telegraph, Yahoo

Source: Samsung