WHY THIS MATTERS IN BRIEF

As healthcare and robotics merge, from Robo-Surgeons to Nanobots, we need to find new ways to help them navigate to where they’re needed.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

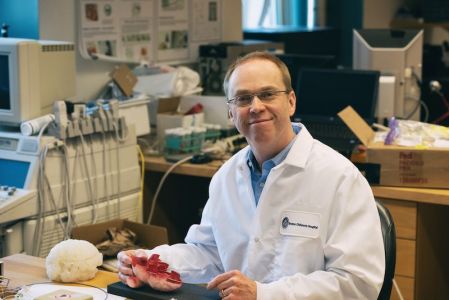

Surgeons have used joystick-operated robots for more than a decade, and recent advances have produced autonomous dental, general, and neurosurgical robots. 5G has even let surgeons use those same foundational robot technologies to perform remote surgeries on patients in buildings that, for now, are tens of miles away from the actual surgeon. And recently, perhaps even more impressively and sci-fi sounding, teams in China managed to steer swarms of surgical nanobots through mazes of simulated blood vessels using nothing more than magnetism. All that said, Pierre Dupont, PhD, Chief of Paediatric Cardiac Bioengineering at Boston Children’s Hospital, says that to his knowledge his team is the first in the world to have created an autonomous surgical robot that’s capable of navigating itself, without any human help, to a desired destination inside the human body, in much the same way that a self-driving car finds its own way to its destination.

Dupont, who authored the paper on their work, envisions autonomous robots like these assisting surgeons in complex operations, reducing fatigue and freeing surgeons to focus on the most difficult manoeuvres, which in turn will help improve patient outcomes.

“The right way to think about this is through the analogy of a fighter pilot and a fighter plane,” he says. “The fighter plane takes on the routine tasks like flying the plane, so the pilot can focus on the higher-level tasks of the mission.”

In the latest example of robotic wizardry the team’s robotic catheter navigated itself through a patient’s body using an optical touch sensor developed in Dupont’s lab that was “informed by a map of the cardiac anatomy and preoperative scans.” The touch sensor uses Artificial Intelligence (AI) and image processing algorithms to enable the catheter to figure out where it is in the heart and where it needs to go.

For the demo, the team performed a highly technically demanding procedure known as paravalvular aortic leak closure, which repairs replacement heart valves that have begun leaking around the edges. Once the robotic catheter reached the leak location, an experienced cardiac surgeon then took control and inserted a plug to close the leak.

In repeated trials, the robotic catheter successfully navigated to heart valve leaks in roughly the same amount of time as a surgeon using either a traditional hand tool or a joystick-controlled robot.

Using a navigational technique called “wall following,” the robotic catheter’s optical touch sensor sampled its environment at regular intervals, in much the same way that insects’ antennae or rodents’ whiskers sample their surroundings to build mental maps of unfamiliar, dark environments. Using images from a tip-mounted camera, the sensor told the catheter whether it was touching blood, the heart wall, or a valve, and how hard it was pressing, which kept it from damaging the beating heart.
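To make the sampling idea a little more concrete, here is a minimal Python sketch of a single control step. It is not the team’s code: the `Tissue` labels, the `Sample` type, the `MAX_FORCE` limit, and the action names are all hypothetical, chosen only to illustrate how a contact type plus a force reading could be turned into a next move.

```python
from dataclasses import dataclass
from enum import Enum


class Tissue(Enum):
    """What the tip is touching (hypothetical labels for illustration)."""
    BLOOD = "blood"
    WALL = "wall"
    VALVE = "valve"


@dataclass
class Sample:
    tissue: Tissue  # label assigned to the latest tip-camera image
    force: float    # estimated contact force, arbitrary units


# Hypothetical safety limit; the article gives no real value.
MAX_FORCE = 1.0


def next_action(sample: Sample) -> str:
    """Pick a next move from one sensor sample."""
    # Safety first: back off whenever the tip presses too hard.
    if sample.force > MAX_FORCE:
        return "retract"
    # Floating in blood means contact was lost, so look for the wall again.
    if sample.tissue is Tissue.BLOOD:
        return "seek_wall"
    # Touching the heart wall: keep sliding along it.
    if sample.tissue is Tissue.WALL:
        return "follow_wall"
    # Otherwise the tip is on the valve: trace around it.
    return "follow_valve"
```

Calling `next_action(Sample(Tissue.WALL, 0.4))` would return `"follow_wall"`, while the same contact at a force of 2.0 would return `"retract"`.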

Data from preoperative imaging and machine learning algorithms helped the catheter interpret visual features. In this way, the robotic catheter advanced by itself from the base of the heart, along the wall of the left ventricle and around the leaky valve until it reached the location of the leak.
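For readers who want a feel for wall following itself, the sketch below uses the classic right-hand rule on a toy 2D grid. It is only an analogy: the real catheter works in a beating 3D heart with camera images and preoperative scans, and the grid, the `wall_follow` function, and its parameters are assumptions made up for this example.

```python
# Headings in clockwise order: north, east, south, west.
DIRS = [(-1, 0), (0, 1), (1, 0), (0, -1)]


def wall_follow(grid, start, goal, heading=1, max_steps=1000):
    """Right-hand-rule wall following on a grid fully bordered by '#' walls.

    Returns the list of visited cells from start to goal, or None if the
    goal is not reached within max_steps.
    """
    pos = start
    path = [pos]
    for _ in range(max_steps):
        if pos == goal:
            return path
        # Prefer turning right, then straight, then left, then turning back.
        for turn in (1, 0, -1, 2):
            d = (heading + turn) % 4
            r, c = pos[0] + DIRS[d][0], pos[1] + DIRS[d][1]
            if grid[r][c] != "#":
                heading = d
                pos = (r, c)
                path.append(pos)
                break
        else:
            return None  # boxed in on all four sides
    return path if pos == goal else None


# Toy "chamber": S is the entry point, G is the leak location.
GRID = [
    "#####",
    "#S  #",
    "# # #",
    "#  G#",
    "#####",
]

path = wall_follow(GRID, start=(1, 1), goal=(3, 3))
```

The follower never needs a full map. It only needs to know, at each step, whether the neighbouring cell is a wall, which is why the technique suits a sensor that can only feel what it is touching. The trade-off is that hugging the wall is generally not the shortest route.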

“The algorithms help the catheter figure out what type of tissue it’s touching, where it is in the heart, and how it should choose its next motion to get where we want it to go,” Dupont explains.

The autonomous robot took a bit longer than the surgeon to reach the leaky valve, but only because its wall-following technique meant that it took the longest path.

“The navigation time was statistically equivalent for all, which we think is pretty impressive given that you’re inside the blood-filled beating heart and trying to reach a millimeter-scale target on a specific valve,” says Dupont.

He adds that the robot’s ability to visualize and sense its environment could eliminate the need for fluoroscopic imaging, which is typically used in this operation and exposes patients to ionizing radiation.

Dupont says the project was the most challenging of his career. While the cardiac surgical fellow, who performed the operations on pigs, was able to relax while the robot found the valve leaks, the project was taxing for Dupont’s engineering fellows, who sometimes had to reprogram the robot mid-operation as they perfected the technology.

“I remember times when the engineers on our team walked out of the OR completely exhausted, but we managed to pull it off,” says Dupont. “Now that we’ve demonstrated autonomous in vivo [robot] navigation, much more is possible.”

Some cardiac interventionalists who are aware of Dupont’s work envision using robots for more than navigation, for example to perform routine heart-mapping tasks. Some envision this technology providing guidance during particularly difficult or unusual cases, or assisting in operations in parts of the world that lack highly experienced surgeons.

As the US Food and Drug Administration begins to develop its own regulatory framework for AI-enabled devices, Dupont envisions autonomous surgical robots all over the world pooling their data to continuously improve their performance over time. That would be the equivalent of a Hive Mind or, in plain English, “Machine Learning and communication in the cloud,” which is something I’ve discussed before and that’s already being used to help robots learn from one another at scale.

“This would not only level the playing field, it would raise it,” says Dupont. “Every clinician in the world would be operating at a level of skill and experience equivalent to the best in their field. This has always been the promise of medical robots. And autonomy may be what gets us there.”

Source: Boston Children’s Hospital